Ensuring the security of industrial control systems (ICS) can be a daunting and complex task, requiring expertise and experience in several areas from a variety of contributors. The rapid pace of improvements to both technology and practice adds to the challenge. Finally, the amount and complexity of information, guidance and advice that is available can easily become overwhelming.

Rather than focusing on the details of security products and technology, it is necessary to approach the subject from an engineering perspective, concentrating first on why security is important and then on the roles and responsibilities involved in an effective response. This article provides practical guidance on how to address this challenge.

While the ultimate accountability for the security of these systems lies with the owner-operator, successfully addressing the subject requires a collaborative approach that includes contributions from several stakeholders. Understanding, agreeing on and coordinating the respective responsibilities of these contributors is actually more important than any specific technology or method.

While accountable, the owner-operator need not feel alone. Specific responsibilities can be assigned or delegated to others who have specific experience or expertise. The most important message is that this is a challenge that cannot be ignored. Inaction is simply not an acceptable response.

IT and OT

Both information systems security and ICS security have received significant attention over the past several years. Much has been written on the subject, including articles that have appeared in the pages of this magazine [1–3]. There is considerable discussion and debate within the security community about the degree to which ICS security is similar to or distinct from more traditional information systems security. Recently, this has led to the common use of the terms information technology (IT) and operations technology (OT) to describe these two areas of attention. Regardless of the specific terms used, there is general agreement that the technology is evolving rapidly, perhaps too rapidly for all but the most dedicated specialists to track.

Engineering and operations

Throughout this debate, it is not clear that there has been sufficient involvement from the engineering and operations communities. Given the rapid pace of change and the sometimes confusing or arcane nature of the subject, what is the typical process or operations engineer to do about security? These engineers are often the most direct representatives of the owner-operator, and they are the ones expected to address the accountability mentioned earlier.

Changing the conversation

The current state of affairs requires a change in tone and context of the conversation about cybersecurity. Typically much of the security-related dialog is concentrated on protecting and acting on “the system” or “the network.” The reality is that this approach is often too abstract when applied to industrial systems. Why should a design or operations engineer care about these abstract terms, when they face more pressing and tangible challenges and problems on a daily basis? It is not primarily about protecting the system, the network or the data. It is about the potential implications of not protecting them.

An informed and balanced assessment of these implications has to be the first step in this refocused conversation. The people who are the most experienced in the design and operation of the process or equipment have the best understanding of what those implications may be. It is important that they consider a breach in security to be another possible source of failure or compromise, just like equipment failure, uncontrolled reactions or other factors. With this concept, what has been framed as a security opportunity now becomes an engineering opportunity.

The challenge

A realistic assessment of implications in no way changes the technical nature of the challenge. Securing the systems, networks and data provides a means to an end, and that end is the safety, availability and reliability of the underlying process or equipment. Compromising the security of these system components can result in negative impacts in the physical world. Even in situations where ICS elements (such as a safety instrumented system) are physically isolated and not connected to a network, it is still possible for poor practices to lead to security compromise, which can in turn affect plant or process operations.

Risk is real

The reality of this type of scenario has been demonstrated in both simulations and real incidents. Although companies are often reluctant to publicly discuss such incidents, those with experience in this area know that they have occurred. Discussions of this subject inevitably lead to a debate about exactly what constitutes a “cyber incident.” Some have taken the position that only deliberately malicious acts should be considered, while others have argued that inadvertent acts must also be included. In fact, this distinction is largely artificial and not particularly important. What is important to the owner-operator company is a realistic assessment of risk to its operation.

Basic elements

Before such a risk assessment can be conducted, it is necessary to understand certain basic elements of ICS security, taking into account the implications for both the design and the operation of industrial facilities. This assessment does not require a great deal of complicated or technical analysis, but it helps to have an appreciation of a few important concepts.

Lifecycles

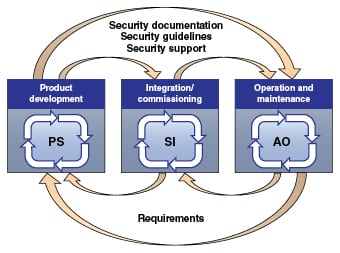

Perhaps the most fundamental of these concepts is the lifecycle of the industrial control system. Several views of this lifecycle have been proposed, one of which is being developed for use in international standards on this subject. This model is shown in Figure 1.*

As seen in Figure 1, this model describes three interconnected processes that correspond to the phases of the solution lifecycle, as well as to the stakeholder groups that have the primary responsibility for each.

• The product supplier (PS) has primary responsibility for the product development lifecycle, which must include security in the design of the individual products

• The integration or commissioning lifecycle combines a variety of products to create the overall control solution. This phase is primarily the responsibility of the system integrator (SI)

• The operation and maintenance lifecycle is the principal focus of the asset owner (AO) or owner-operator (for the purpose of this discussion, the terms owner-operator and asset owner are considered to be synonymous)

As shown in the model, these lifecycles are connected through the sharing of system and support documentation, as well as requirements.

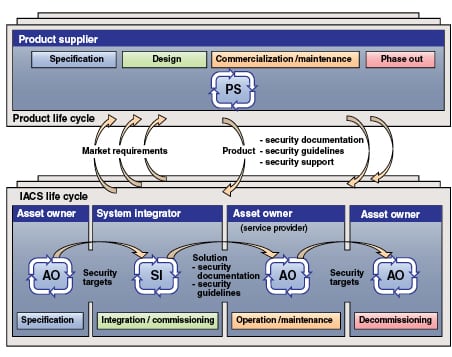

A different view (also under development for use in standards) is shown in Figure 2.* In this case, the focus is on how the development of products is related to the ownership and operation of the resulting systems.

The phases of ownership and operation range from initial specification, through to eventual decommissioning and removal or replacement. Through all phases of this cycle, it is essential to have a realistic assessment of the risk associated with a cyber-related incident, where risk is generally considered to be a function of threat, vulnerability and consequence. Each of these factors has to be considered separately.
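The article treats risk as a function of threat, vulnerability and consequence but does not prescribe a formula. As a minimal sketch, assuming a qualitative 1 to 5 rating for each factor and a multiplicative combination (one common convention, not one taken from the standards), the factors might be scored separately and then combined to rank scenarios. The scenario names and ratings below are hypothetical.

```python
from dataclasses import dataclass

# Assumption: each factor is rated on a qualitative 1 (low) to 5 (high) scale.
SCALE = range(1, 6)


@dataclass(frozen=True)
class Scenario:
    name: str
    threat: int         # how likely a threat source is to act
    vulnerability: int  # how easily such an act could succeed
    consequence: int    # severity of the impact on the process or business

    def __post_init__(self) -> None:
        # Reject ratings outside the assumed 1-5 scale.
        for factor in ("threat", "vulnerability", "consequence"):
            if getattr(self, factor) not in SCALE:
                raise ValueError(f"{factor} must be between 1 and 5")

    def risk(self) -> int:
        # Multiplicative combination: a low rating on any single factor keeps
        # the overall score low, so each factor must be assessed on its own.
        return self.threat * self.vulnerability * self.consequence


def rank(scenarios: list[Scenario]) -> list[tuple[str, int]]:
    """Return (name, score) pairs, highest risk first."""
    scored = [(s.name, s.risk()) for s in scenarios]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical scenarios and ratings, for illustration only.
    examples = [
        Scenario("Malware introduced via removable media", 3, 4, 3),
        Scenario("Unauthorized remote access to historian", 2, 3, 2),
        Scenario("Unauthorized change to safety system logic", 1, 2, 5),
    ]
    for name, score in rank(examples):
        print(f"{score:3d}  {name}")
```

The consequence rating is where the owner-operator's engineering and operations knowledge matters most; the arithmetic only orders the scenarios once those judgments have been made.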

The owner-operator is either accountable or directly responsible for activities in each of these phases. Response to security challenges typically takes the form of a cybersecurity management system (CSMS).

Industry standards

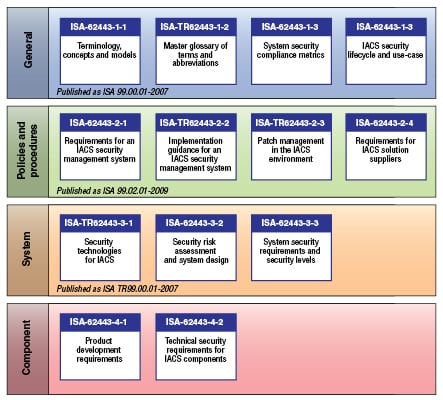

Industry standards represent accepted conventions and practices with respect to a particular subject. In the case of ICS security, the relevant standards are the ISA-62443 series [4], under development by the ISA99 committee of the International Society for Automation (ISA) and by the International Electrotechnical Commission (IEC). An overview of this series is shown in Figure 3.

The ISA-62443 standards are purposely structured so that the owner-operator community will focus primarily on the top two levels of the model shown in Figure 3. For example, the ISA-62443-2-1 standard provides specific direction on how to structure a cybersecurity management system that complements similar practices for general-purpose IT systems.

The standards in the two lower levels of Figure 3 are directed primarily to those who develop and assemble industrial control systems.

Product certification

The owner-operator requires a reliable means of determining whether specific control systems and components adhere to standards such as ISA-62443. This is accomplished through formal programs, such as ISASecure** [5], that certify these products as compliant with a set of formal specifications taken from the standards.

Although products that are certified as compliant provide the basic building blocks for security, a complete response by the owner-operator also requires more practical guidance and assistance.

NIST Framework

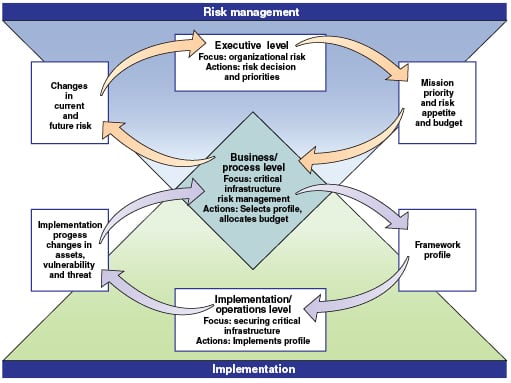

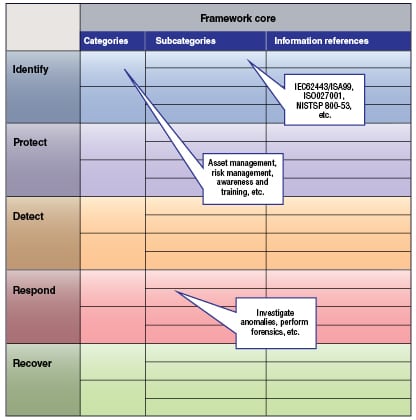

In the U.S., an important recent development in cybersecurity has been the creation of the NIST (National Institute of Standards and Technology) Cybersecurity Framework [6]. Developed in response to Executive Order 13636, this framework provides the owner-operator with a specific approach to the development of a comprehensive cybersecurity program. Figure 4 shows a view of the information flows required to address cybersecurity, as described by the framework.

The NIST Framework consists of the following elements:

• The Framework Core

• The Framework Profile

• The Framework Implementation Tiers

The Framework Core (Figure 5) describes the set of activities that an organization should perform as part of a cybersecurity management system. It starts with five functions (identify, protect, detect, respond and recover), which are divided into categories (such as asset management, risk management, and awareness and training) and then into subcategories (such as investigate anomalies and perform forensics). References to the relevant sector, national or international standards, down to specific requirements or clauses, are then listed for each subcategory.
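The hierarchy of the Framework Core (function, category, subcategory, references) can be pictured as a simple nested data structure. The sketch below is illustrative only: the wording of categories and subcategories is paraphrased, the placement of the two analysis subcategories under a "Respond" category is an assumption, and the ISA-62443-2-1 references are attached hypothetically to show how an owner-operator might find every outcome that a given standard addresses. Consult the Framework itself for the actual entries and references.

```python
from dataclasses import dataclass, field


@dataclass
class Subcategory:
    outcome: str
    # Informative references: standards (or clauses) that address this outcome.
    references: list[str] = field(default_factory=list)


@dataclass
class Category:
    name: str
    subcategories: list[Subcategory] = field(default_factory=list)


# The five Framework Core functions, in the order the Framework lists them.
FUNCTIONS = ("Identify", "Protect", "Detect", "Respond", "Recover")


def example_core() -> dict[str, list[Category]]:
    """Build a small, illustrative slice of the Core (wording paraphrased)."""
    core: dict[str, list[Category]] = {name: [] for name in FUNCTIONS}
    core["Identify"].append(Category("Asset management", [
        Subcategory("Devices and systems are inventoried", ["ISA-62443-2-1"])]))
    core["Identify"].append(Category("Risk management", [
        Subcategory("Risk management processes are established", ["ISA-62443-2-1"])]))
    core["Protect"].append(Category("Awareness and training", [
        Subcategory("Users are informed and trained", ["ISA-62443-2-1"])]))
    core["Respond"].append(Category("Analysis", [
        Subcategory("Anomalies are investigated"),
        Subcategory("Forensics are performed")]))
    return core


def outcomes_citing(core: dict[str, list[Category]],
                    standard: str) -> list[tuple[str, str, str]]:
    """List (function, category, outcome) for each subcategory citing `standard`."""
    hits = []
    for function in FUNCTIONS:
        for category in core[function]:
            for sub in category.subcategories:
                if any(ref.startswith(standard) for ref in sub.references):
                    hits.append((function, category.name, sub.outcome))
    return hits


if __name__ == "__main__":
    for function, category, outcome in outcomes_citing(example_core(), "ISA-62443"):
        print(f"{function:<9} {category:<24} {outcome}")
```

Reading the structure in the other direction, from a standard back to the outcomes it supports, is one way to see where an existing ISA-62443-based program already covers parts of the Framework.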

Although the framework's scope of application covers all aspects of cybersecurity in the critical infrastructure, it is also applicable to the narrower scope of industrial control systems security. The ISA-62443 standards are prominent references in this area.

Additional resources

Additional information, including sector, industry and international standards, as well as technology-, product- and sector-specific guidance, is available from many sources, and many training programs are offered. Several of these resources can assist and guide the owner-operator in planning and executing an effective cybersecurity program. The irony is that the problem is not a lack of information, but an overabundance of it. The result is often confusion and uncertainty about where and how to begin.

If a company is a member of an industry trade association, there may be guidance available from that source. For example, members of the American Chemistry Council (ACC; Washington, D.C.; www.americanchemistry.com) have access to sector-specific guidance, as well as vehicles such as the Responsible Care Security Code [7]. Professional associations, such as the Institute of Electrical and Electronics Engineers (IEEE; Washington, D.C.; www.ieee.org) or the International Society for Automation (ISA; Research Triangle Park, N.C.; www.isa.org) [8], also have information available in the form of standards, practices and training.

Once the references, guidance and training resources have been identified, the owner-operators must assemble the components of their cybersecurity program. As mentioned earlier, the first step is to accept this accountability and acknowledge the opportunity. If management support is required, it is useful to explain the potential implications of a security incident in terms of impact on the process or business.

Threat information

Information about possible threats often comes in the form of anecdotal reports of attacks or incidents that have occurred elsewhere. While some reports have very specific relevance to industrial control systems (such as those on Stuxnet and Shamoon), others are much more generic. Sources for this information include security companies and, perhaps, government sources such as the Industrial Control Systems Cyber Emergency Response Team (ICS-CERT) [9].

Vulnerabilities

Sources of information on vulnerabilities include the suppliers of systems and component software, as well as reporting sources such as ICS-CERT. In many cases, vulnerabilities are not disclosed until a patch or some other mitigation is available. However, this is not a universal practice, which is why it is important to maintain regular communications with system and technology providers.
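Vulnerability information is only useful once it is matched against what is actually installed. The following is a minimal sketch of that matching step, assuming advisories have already been transcribed into simple records (vendor, product, affected versions, whether a patch exists). The record fields, asset tags and advisory identifiers are hypothetical, and exact version matching is a deliberate simplification of how real advisories describe affected ranges.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    tag: str       # plant asset identifier
    vendor: str
    product: str
    version: str


@dataclass(frozen=True)
class Advisory:
    advisory_id: str                  # identifier used by the publishing source
    vendor: str
    product: str
    affected_versions: frozenset[str]
    patch_available: bool             # False means a compensating measure is needed


def exposed_assets(inventory: list[Asset],
                   advisories: list[Advisory]) -> list[tuple[str, str, bool]]:
    """Pair each inventoried asset with every advisory naming its exact version."""
    matches = []
    for adv in advisories:
        for asset in inventory:
            if (asset.vendor.lower() == adv.vendor.lower()
                    and asset.product.lower() == adv.product.lower()
                    and asset.version in adv.affected_versions):
                matches.append((asset.tag, adv.advisory_id, adv.patch_available))
    return matches


if __name__ == "__main__":
    # Hypothetical inventory and advisory data.
    inventory = [
        Asset("PLC-101", "VendorA", "ControllerX", "2.1"),
        Asset("PLC-102", "VendorA", "ControllerX", "3.0"),
        Asset("HMI-7", "VendorB", "PanelY", "5.0"),
    ]
    advisories = [
        Advisory("ADV-0001", "VendorA", "ControllerX", frozenset({"2.0", "2.1"}), False),
    ]
    for tag, adv_id, patched in exposed_assets(inventory, advisories):
        action = "apply patch" if patched else "apply compensating measure"
        print(f"{tag}: {adv_id} -> {action}")
```

Keeping the patch_available flag separate reflects the point above: not every disclosed vulnerability arrives with a patch, and those matches call for a conversation with the supplier.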

Consequences

The consequence component should receive considerable attention from the owner-operators, as they are in the best position to understand it. The best source of this type of information is most often internal, since it will be those who have designed and operated the plants or processes who best understand the possible consequences in areas such as safety, reliability, availability and product quality. Recall from the above discussion that a cyber incident can just as easily lead to consequences similar to those of a more-expected physical failure, particularly in highly automated operations.

When considering these consequences it is important to be realistic. Far too often when this topic is discussed in the context of industrial control systems, there is a tendency to lapse into hyperbole and to talk about explosions, release of noxious materials and other catastrophic events. While these events may in fact be possible, they are probably not likely. Industrial processes have long been designed with these possibilities in mind, and mitigating measures have already been taken in both design and operation.

Dwelling on such scenarios when discussing the importance of security with experienced operations people often leads them to simply tune out the discussion. Engaging the engineering community requires that the conversation take place in a context they can relate to.

Creating the program

The best choice of a starting point depends somewhat on the individual situation. In some companies there may already be corporate programs in place that address a portion of the subject. Assistance is also available from a variety of external sources. In either case, it is important to remember that effective ICS security requires a multi-disciplinary approach, drawing on expertise in security, engineering and operations.

Basic steps

Regardless of the details of a specific process, the basic steps for creating a program can be expressed as follows:

1. Identify contributors (Plan)

2. Establish the scope of interest (Plan)

3. Acquire or develop an inventory of systems within scope (Do)

4. Conduct an assessment of the current state of security (Check)

5. Establish and execute a plan to improve performance (Act)

Contributors

Many of us have received advice to avoid allowing the business IT function to be responsible for industrial cybersecurity. However, rather than ignoring this source of expertise or building barriers to its involvement, a better approach is to reach out to IT professionals to form a partnership. Much of their technology and security-related expertise does have value in this situation, as long as it is carefully applied, with a full understanding of the operational characteristics and constraints.

Effective methods of establishing this partnership could easily be the subject of an entirely separate discussion, but any such relationship has to begin with a description of the shared vision or intent, and an open and frank exchange of wants and offers. What does each party hope to gain through the relationship, and what do they bring to it?

Establish scope

The first task is to define the scope of the program, that is, the systems and components that are to be included. As described in the ISA-62443 standards, the scope can be defined using a variety of criteria, including the following:

Functionality included. The scope can be described in terms of the range of functionality within an organization’s information and automation systems. This functionality is typically described in terms of one or more models.

Systems and interfaces. It is also possible to describe the scope in terms of connectivity to associated systems. A comprehensive program must include systems that can affect or influence the safe, secure and reliable operation of industrial processes.

Included activities. A system should be considered to be within scope if the activity it performs is necessary for any of the following:

• predictable process operation

• process or personnel safety

• process reliability or availability

• process efficiency

• process operability

• product quality

• environmental protection

• compliance with relevant regulations

• product sales or custody transfer affecting or influencing industrial processes

Asset-based. The scope should include assets that meet any of the following criteria, or whose security is essential to the protection of another asset that does any of the following:

• is necessary to maintain the economic value of a manufacturing or operating process

• performs a function necessary to the operation of a manufacturing or operating process

• represents intellectual property of a manufacturing or operating process

• is necessary to operate and maintain security for a manufacturing or operating process

• is necessary to protect personnel, contractors and visitors involved in a manufacturing or operating process

• is necessary to protect the environment

• is necessary to protect the public from events caused by a manufacturing or operating process

• is a legal requirement, especially for security purposes, of a manufacturing or operating process

• is needed for disaster recovery

• is needed for logging security events

Consequence-based. Scope definition should include an assessment of what could go wrong, and where, to disrupt operations; how likely it is that a cyberattack could initiate such a disruption; and what consequences could result. The output of this determination should include sufficient information to help ordinary users (not necessarily security specialists) identify and determine the relevant security properties.
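Parts of these criteria lend themselves to a simple first-pass screening of candidate systems. The sketch below is an assumption-laden illustration rather than a method from the standards: it encodes only the activity-based list (paraphrased) plus the asset-based rule that a system is in scope if its security is essential to protecting another in-scope system. The remaining asset-based items (such as disaster recovery or security event logging) and the consequence-based assessment still require engineering judgment. All system names are hypothetical.

```python
from dataclasses import dataclass, field

# Activity-based criteria, paraphrased from the list above.
SCOPE_ACTIVITIES = frozenset({
    "predictable process operation",
    "process or personnel safety",
    "process reliability or availability",
    "process efficiency",
    "process operability",
    "product quality",
    "environmental protection",
    "regulatory compliance",
    "product sales or custody transfer",
})


@dataclass
class System:
    name: str
    # Activities (from SCOPE_ACTIVITIES) this system is necessary for.
    supports: set[str] = field(default_factory=set)
    # Names of other systems whose protection depends on this one's security.
    protects: list[str] = field(default_factory=list)


def in_scope(system: System, catalog: dict[str, System],
             _seen: frozenset[str] = frozenset()) -> bool:
    """True if the system supports a listed activity, or if its security is
    essential to protecting another system that is itself in scope."""
    if system.supports & SCOPE_ACTIVITIES:
        return True
    seen = _seen | {system.name}   # guards against circular dependencies
    return any(in_scope(catalog[name], catalog, seen)
               for name in system.protects
               if name in catalog and name not in seen)


if __name__ == "__main__":
    # Hypothetical systems for illustration.
    catalog = {
        "DCS": System("DCS", {"process or personnel safety"}),
        "Domain controller": System("Domain controller", protects=["DCS"]),
        "Cafeteria kiosk": System("Cafeteria kiosk"),
    }
    for name, system in catalog.items():
        print(f"{name}: {'in scope' if in_scope(system, catalog) else 'out of scope'}")
```

The "protects" rule is what pulls supporting IT infrastructure, such as a directory server used by the control system, into scope even though it performs no process function itself.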

Inventory

Having considered the above criteria to define the focus or scope of the cybersecurity response, the next task is to develop an inventory of the systems, components and technologies that are included in scope. This is the “Do” step. Sources of such information include design and maintenance documents for the control systems and connecting networks.

In cases where the configuration has evolved over time, it may be necessary to confirm and supplement the records by physically surveying the installation. It may be necessary for this process to be repeated several times, adding more detail each time. Although this can be a tedious process, it is essential to have an accurate description of the system to be protected.
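Each pass of a physical survey can be compared with the documented records to show where they disagree. As a minimal sketch, assuming both sources have been reduced to a mapping from a (hypothetical) asset tag to a short description such as model and firmware version, the comparison reduces to a few set operations.

```python
def reconcile(documented: dict[str, str],
              surveyed: dict[str, str]) -> dict[str, list[str]]:
    """Compare design/maintenance records with a physical survey.

    Both arguments map an asset tag to a short description
    (for example, model and firmware version).
    """
    doc_tags, field_tags = set(documented), set(surveyed)
    return {
        # Found in the field but absent from the records.
        "undocumented": sorted(field_tags - doc_tags),
        # In the records but not found in the field.
        "missing": sorted(doc_tags - field_tags),
        # Present in both, but the description no longer matches.
        "changed": sorted(t for t in doc_tags & field_tags
                          if documented[t] != surveyed[t]),
    }


if __name__ == "__main__":
    # Hypothetical records and survey results.
    documented = {"PLC-101": "ControllerX fw 2.1", "HMI-7": "PanelY 5.0",
                  "SW-3": "Switch 1.2"}
    surveyed = {"PLC-101": "ControllerX fw 2.4", "HMI-7": "PanelY 5.0",
                "MODEM-1": "Dial-up modem"}
    for kind, tags in reconcile(documented, surveyed).items():
        print(f"{kind:<13} {', '.join(tags) or '-'}")
```

Undocumented items found in the field, such as an unrecorded modem, are often the most important output of a survey, since they may represent connections that no one is managing.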

An accurate and current inventory can only be prepared with involvement of the owner-operators, since they are most familiar with the installation. In this case, the owner-operator is both accountable and responsible.

Automated or programmable scanning tools may appear to offer a convenient means of identifying systems and devices. Such tools should be applied very carefully, however, because there is a very real potential for these scans to disrupt normal operations.

Assessment

With a thorough understanding and appreciation of the systems to be protected, attention now moves to the assessment phase. This is the “Check” step. It is at this point that the state of the system components is compared to expectations drawn from sources such as standards and recommended practices.

The key decision at this point is which standards or measures to use as the basis for assessing performance. To some extent, this choice depends on factors such as sector or industry affiliation. For example, facilities that are part of the U.S. chemical sector may be expected to demonstrate compliance with the Chemical Facility Anti-Terrorism Standards (CFATS) [10] or with the cyber-related elements of the Responsible Care Security Code.

Similar expectations exist for other sectors. Assistance in selecting appropriate assessment standards is available from several sources, and several assessment tools have been developed by, and are readily available from, the U.S. Department of Homeland Security (DHS; www.dhs.gov).

One of the more recently available tools is the Cyber Resilience Review (CRR) [11]. It can be used for a “non-technical assessment to evaluate an organization’s operational resilience and cybersecurity practices.” Although it is intended to be applied to all aspects of a company’s security program, this tool also has some relevance for ICS security. The expectation is that it would be applied at the corporate level.
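Whichever standard or tool is chosen, the core of the “Check” step is a comparison of observed practice against a list of expected requirements. As a minimal sketch, assuming the requirements have been given simple hypothetical identifiers (not actual clause numbers) and each has been marked as satisfied or not, the results can be sorted into met requirements, gaps and items not yet assessed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    req_id: str        # hypothetical identifier, not a clause number
    description: str


def assess(requirements: list[Requirement],
           observed: dict[str, bool]) -> dict[str, list[str]]:
    """Classify each requirement as met, a gap, or not yet assessed."""
    result: dict[str, list[str]] = {"met": [], "gap": [], "not_assessed": []}
    for req in requirements:
        status = observed.get(req.req_id)
        if status is None:
            result["not_assessed"].append(req.req_id)
        elif status:
            result["met"].append(req.req_id)
        else:
            result["gap"].append(req.req_id)
    return result


if __name__ == "__main__":
    # Hypothetical requirements and observations.
    requirements = [
        Requirement("REQ-1", "An inventory of in-scope systems is maintained"),
        Requirement("REQ-2", "Remote access to control systems requires approval"),
        Requirement("REQ-3", "Use of removable media is controlled"),
    ]
    observed = {"REQ-1": True, "REQ-2": False}
    print(assess(requirements, observed))
```

Tracking “not assessed” separately from “gap” keeps incomplete assessments from being mistaken for either compliance or failure.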

Improvement plan

Regardless of the method or approach used to complete the assessment, the results will have little value if they are not used to make improvements. A common approach is to develop a “multi-generation” plan that characterizes each of the required improvement steps or tasks as short-, medium- or long-term. Regardless of the specific method used, it is essential to address the findings of the assessment in order to make regular improvements in the state of control systems security.
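The article does not specify how tasks are assigned to the short-, medium- and long-term generations of such a plan. As one possible sketch, assuming each finding has been given an estimated implementation time in months and that hypothetical thresholds of 6 and 18 months separate the horizons, the tasks could be sorted as follows. A real plan might instead weigh risk reduction, cost or planned outages.

```python
from dataclasses import dataclass

# Assumed horizons: (label, maximum months to implement); None means no limit.
HORIZONS = (("short-term", 6), ("medium-term", 18), ("long-term", None))


@dataclass(frozen=True)
class Finding:
    task: str
    months_to_implement: int   # estimated effort, assigned by the team


def multi_generation_plan(findings: list[Finding]) -> dict[str, list[str]]:
    """Place each improvement task in the first horizon whose limit it fits."""
    plan: dict[str, list[str]] = {label: [] for label, _ in HORIZONS}
    for finding in sorted(findings, key=lambda f: f.months_to_implement):
        for label, limit in HORIZONS:
            if limit is None or finding.months_to_implement <= limit:
                plan[label].append(finding.task)
                break
    return plan


if __name__ == "__main__":
    # Hypothetical findings from an assessment.
    findings = [
        Finding("Replace unsupported controllers", 36),
        Finding("Disable unused remote access paths", 1),
        Finding("Separate control and business networks", 12),
    ]
    for label, tasks in multi_generation_plan(findings).items():
        print(f"{label}: {tasks}")
```

Re-running the assessment after each generation is complete closes the Plan-Do-Check-Act loop described earlier.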

Final thoughts

Addressing the cybersecurity of industrial control systems can be a daunting task, but it is not one that can be ignored. Although the complex and arcane nature of the subject can tempt design and operations engineers to dismiss it as an “IT problem”, the reality is that accountability for the protected systems most often lies with engineering and operations.

A collaborative response, beginning with a realistic assessment of potential implications and consequences, is needed to develop an effective ICS cybersecurity management system.

* Figures 1 and 2 are unpublished submissions to the ISA99 Committee on Industrial Automation and Control Systems Security by R. Schierholz and P. Kobes

** ISASecure is a trademark of the Automation Standards Compliance Institute

References

1. Ginter, Andrew, Securing Industrial Control Systems, Chem. Eng., pp. 30–35, July 2013.

2. Lozowski, Dorothy, Chemical Plant Security: Gating more than the perimeter, Chem. Eng., pp. 18–22, September 2012.

3. Ginter, Andrew, Sikora, Walter, Cybersecurity for Chemical Engineers, Chem. Eng., pp. 49–53, June 2011.

4. en.wikipedia.org/wiki/cyber_security_standards

8. www.isa.org

Author

Eric C. Cosman is an Operations IT consultant with The Dow Chemical Company (Michigan Operations, Midland, MI 48667). He specializes in the application of information technology to all areas of manufacturing and engineering, including the definition of policies and practices, system and technical architecture, and technology management and integration planning processes for manufacturing and engineering systems. With over thirty years of experience in areas including process engineering, process systems software development, telecommunications, IT operations, architecture definition and consulting, he has also held leadership roles in various industry groups, standards committees, focus groups and advisory panels. Cosman is an industry leader in the area of industrial systems cybersecurity, with a specific emphasis on the implications for manufacturing operations. He is a founding member and the current co-chairman of the ISA99 committee on industrial automation and control systems security and the vice president of standards and practices at the International Society for Automation (ISA).